Optimize costs in Microsoft Azure Log Analytics Workspace while ensuring effective log monitoring, diagnostics, and compliance. Discover tips for reducing Azure Log Analytics costs and enhancing real-time log analysis to detect anomalies, failures, and performance bottlenecks.

In modern cloud environments, storing logs in Microsoft Azure Log Analytics Workspace is crucial for monitoring, diagnostics, and compliance. Real-time log analysis helps detect anomalies, failures, or performance bottlenecks.

Also, many companies and industries require logs to be stored for a specific period to comply with legal and regulatory standards.

Although there are costs associated with storing logs in Azure Log Analytics Workspace, these are significantly outweighed by the benefits it offers in terms of security, compliance, and operational effectiveness. Businesses can achieve a balance between pricing and functionality by putting cost-optimization techniques like sampling, filtering, and retention rules into practice.

💸 However, alongside these benefits comes the challenge of managing the associated costs, making it essential for organizations to adopt cost-effective strategies for storing and maintaining logs.

Although there are various methods to store logs, in this blog, we will focus on Log Analytics Workspace, a service provided by Microsoft Azure for storing and analyzing log data.

For applications instrumented with Azure Application Insights, Log Analytics Workspace allows you to correlate telemetry data, improving debugging and performance tuning.

For instance, consider an organization managing multiple Azure Functions, each with Application Insights enabled. These Application Insights resources collect various data, including metrics, function logs, and custom application logs, and store it in a Log Analytics Workspace.

Now, imagine the sheer volume of logs generated daily in such a scenario.

Understanding the Costs

Costs in Azure Log Analytics Workspace arise from the following factors:

1. Log Ingestion

- Definition: The volume of data ingested into the workspace.

- Cost Factor: Larger data volumes lead to higher ingestion costs.

- Optimization Tip: Filter and sample logs to store only critical data.

2. Data Retention

- Definition: The duration for which logs are stored within the workspace.

- Default Policy: 31 days are free; costs apply for longer retention periods.

- Optimization Tip: Export old logs to Azure Blob Storage or other archival solutions.
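To make the two cost factors concrete, here is a minimal Python sketch of a monthly estimate. The function name and both prices are hypothetical placeholders, not Azure list prices, and the model assumes a steady daily ingestion volume. Only the 31-day free retention window comes from the text above.

```python
# Rough monthly cost estimate for a Log Analytics workspace.
# All prices passed in are placeholder assumptions, not Azure list prices.

FREE_RETENTION_DAYS = 31  # retention included at no extra charge (see above)


def estimate_monthly_cost(
    daily_ingestion_gb: float,
    retention_days: int,
    price_per_gb_ingested: float,
    price_per_gb_month_retained: float,
    days_in_month: int = 30,
) -> dict:
    """Return ingestion, retention, and total cost for one month at steady state."""
    ingestion_cost = daily_ingestion_gb * days_in_month * price_per_gb_ingested

    # Only data kept beyond the free window is billed for retention.
    billable_days = max(0, retention_days - FREE_RETENTION_DAYS)
    billable_gb = daily_ingestion_gb * billable_days
    retention_cost = billable_gb * price_per_gb_month_retained

    return {
        "ingestion": round(ingestion_cost, 2),
        "retention": round(retention_cost, 2),
        "total": round(ingestion_cost + retention_cost, 2),
    }


if __name__ == "__main__":
    # Hypothetical prices: 2.50 per GB ingested, 0.10 per GB per month retained.
    print(estimate_monthly_cost(10, 90, 2.50, 0.10))
```

With these made-up numbers, ingestion dominates the bill, which is why the rest of this post focuses on ingestion first.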

Ways to reduce cost using Log Analytics Workspace

Log Ingestion:

Since Azure Functions ingest all their data into the Log Analytics Workspace, the volume of logs can quickly become overwhelming. To address this, we can configure log retention policies at the Application Insights level, which limits how long data is stored and reduces storage costs over time.

Additionally, log ingestion can be controlled by defining a sampling rate at the Application Insights level. Sampling selectively captures a subset of logs, significantly reducing the volume of data ingested from the Azure Functions. This approach not only optimizes storage but also lowers costs associated with data ingestion into the Log Analytics Workspace.

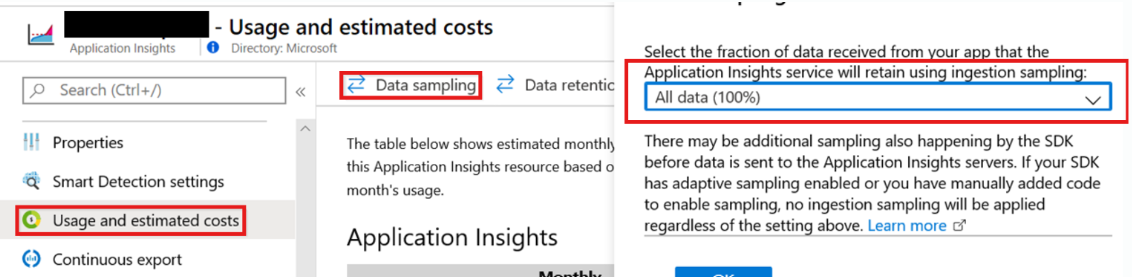

To add Sampling on each Application Insights, use the following method:

- Go to Azure.

- Open your Application Insights resource.

- In the Application Insights pane, go to “Usage and estimated costs” and select “Data Sampling” under the “Monitoring” section.

In this way, we can set a sampling rate on the Application Insights resource of each Azure Function, so that the overall log volume is reduced.

Please note that this sampling applies only to the logs and not to the overall metrics of the Azure Function, such as 4xx and 5xx counts or execution time.

Sampling therefore reduces the overall ingestion into our Log Analytics Workspace while keeping enough data for debugging and analysis.
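The idea behind fixed-rate sampling can be illustrated with a short Python sketch. This is not the Application Insights implementation; `keep_item` and `sampled_volume_gb` are hypothetical helpers, and hashing the operation ID is simply one way to give all log lines from the same invocation the same keep-or-drop decision.

```python
import hashlib


def keep_item(operation_id: str, sampling_percentage: float) -> bool:
    """Decide whether to keep a telemetry item under fixed-rate sampling.

    Hashing the operation ID (instead of rolling a random number per item)
    means every log line from one function invocation gets the same decision.
    """
    digest = hashlib.sha256(operation_id.encode("utf-8")).digest()
    # Map the first 8 bytes of the hash to a score in the range 0 to 100.
    score = int.from_bytes(digest[:8], "big") / 2**64 * 100
    return score < sampling_percentage


def sampled_volume_gb(raw_volume_gb: float, sampling_percentage: float) -> float:
    """Expected ingested volume once sampling is applied."""
    return raw_volume_gb * sampling_percentage / 100


if __name__ == "__main__":
    ids = [f"op-{i}" for i in range(10_000)]  # hypothetical operation IDs
    kept = sum(keep_item(op, 25) for op in ids)
    print(f"kept {kept} of {len(ids)} operations")
    print(f"expected ingestion: {sampled_volume_gb(40, 25)} GB of 40 GB")
```

At a 25% rate, roughly a quarter of the operations survive, so a hypothetical 40 GB of raw logs would shrink to about 10 GB of ingested data.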

Data Retention:

Log Analytics includes the first 31 days of retention at no extra charge. Azure allows us to extend the retention period at additional cost, but shortening it below 31 days does not reduce the bill.

Although we’ve implemented log ingestion rate control by sampling the data, the volume of sampled logs still scales with traffic. During peak periods, if function executions double or even triple, the logs ingested at the same sampling rate increase proportionally. This could cause an unexpected rise in Log Analytics costs, potentially doubling our expenses.

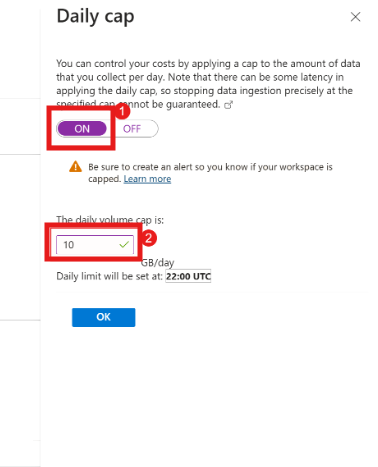

A daily cap on a Log Analytics workspace can help prevent unexpected charges for data ingestion once a specified threshold is reached.

We can define a daily cap for our Log Analytics Workspace so that ingestion stops once the limit is reached, which keeps our overall cloud costs under control.
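A small Python simulation shows how a cap bounds ingestion on a peak day. This is a simplified model under stated assumptions: `apply_daily_cap` is a hypothetical helper, traffic is given as hourly volumes, and the hour in which the cap is crossed is still ingested in full, so the total can slightly overshoot the cap.

```python
from typing import List, Optional, Tuple


def apply_daily_cap(
    hourly_gb: List[float], daily_cap_gb: float
) -> Tuple[float, float, Optional[int]]:
    """Simulate one day of ingestion against a daily cap.

    Returns (ingested_gb, dropped_gb, hour_cap_reached). The hour index is
    None when the cap is never reached.
    """
    ingested = 0.0
    dropped = 0.0
    cap_hour: Optional[int] = None

    for hour, volume in enumerate(hourly_gb):
        if cap_hour is not None:
            # Cap already reached: the rest of the day is not collected.
            dropped += volume
            continue
        ingested += volume
        if ingested >= daily_cap_gb:
            cap_hour = hour

    return ingested, dropped, cap_hour


if __name__ == "__main__":
    normal_day = [0.2] * 24  # hypothetical: 0.2 GB per hour after sampling
    peak_day = [0.6] * 24    # traffic triples, so sampled volume triples too

    print("normal:", apply_daily_cap(normal_day, daily_cap_gb=5))
    print("peak:  ", apply_daily_cap(peak_day, daily_cap_gb=5))
```

On the normal day the cap is never reached. On the peak day ingestion stops partway through the day, which protects the budget but also means later logs are lost, the trade-off discussed next.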

Another question then comes up: what would happen if we encountered a problem with our Azure Function and our log ingestion was stopped because the daily limit had been exceeded? In that case, we wouldn’t be able to debug the logs. Therefore, there must be a system in place that notifies the team in advance of any such circumstance or violation of the daily cap limit, enabling them to take the necessary steps to avoid future occurrences.

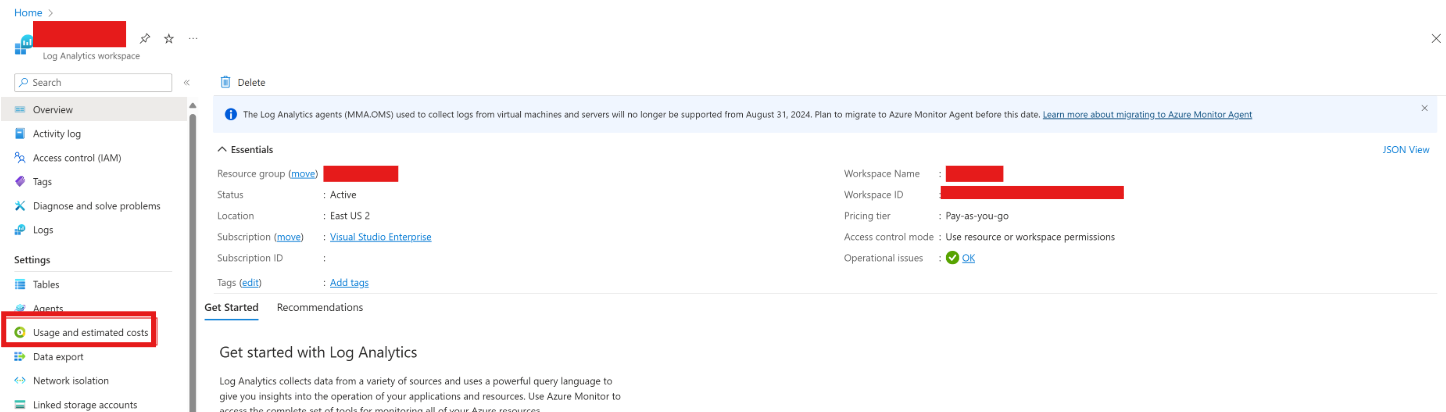

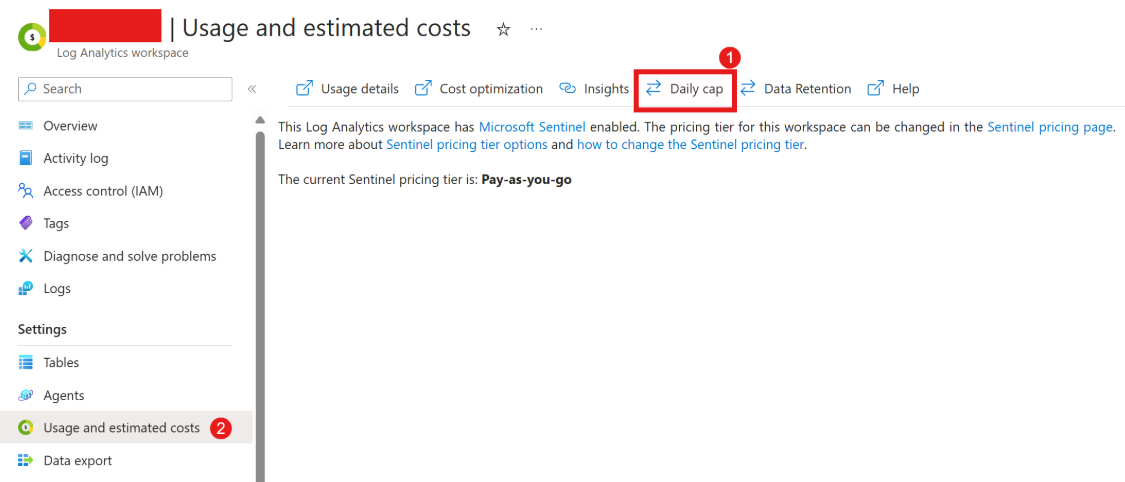

How to add a daily cap limit on Log Analytics Workspace?

- Go to the Azure Portal and open your Log Analytics Workspace.

- In the Log Analytics Workspace pane, click on “Usage and estimated costs” in the left pane.

- Click on “Daily cap”. You’ll see an option to enable the daily cap; toggle it to On.

- Set the daily cap by entering your desired limit (in GB) for data ingestion. Once the threshold is reached, the system stops collecting billable log data from tables in the Analytics or Basic table plans for the rest of the 24-hour period.

- After entering the desired cap limit, click Save to apply the changes.
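If you prefer to manage the cap as configuration instead of clicking through the portal, the same setting can be expressed as a workspace property. The sketch below only builds the JSON body for such an update; it does not call Azure. The helper name is hypothetical, and the `workspaceCapping.dailyQuotaGb` property path reflects my understanding of the workspace resource schema, so verify it against the current ARM reference before use.

```python
import json


def daily_cap_patch_body(daily_quota_gb: float) -> dict:
    """Build a request body that sets the workspace daily cap in GB.

    Assumption: the cap lives under properties.workspaceCapping.dailyQuotaGb
    on the Log Analytics workspace resource.
    """
    if daily_quota_gb <= 0:
        raise ValueError("daily cap must be a positive number of GB")
    return {"properties": {"workspaceCapping": {"dailyQuotaGb": daily_quota_gb}}}


if __name__ == "__main__":
    # Hypothetical cap of 5 GB per day.
    print(json.dumps(daily_cap_patch_body(5), indent=2))
```

Keeping the cap in a reviewed configuration file makes changes to it visible to the whole team, which matters because raising or lowering it directly affects both cost and log availability.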

How to Alert when the daily cap is reached?

When the daily cap is reached for a Log Analytics workspace, a banner appears in the Azure portal, and an event is recorded in the Operations table of the workspace. To stay informed, it’s recommended to set up an alert rule that proactively notifies you when this happens.

To receive notifications when the daily cap is reached, create a log search alert rule with the following configuration.

- Go to Azure –> Select Alerts –> Alert Rules.

- Create a new Alert Rule.

- Then select the Log Analytics Workspace from the list as the scope.

- Configure the condition with the following settings:

- Signal type: Log

- Signal name: Custom log search

- Query:

_LogOperation | where Category =~ "Ingestion" | where Detail contains "OverQuota"

- Measurement:

- Measure: Table rows

- Aggregation type: Count

- Aggregation granularity: 5 minutes

- Operator: Greater than

- Threshold value: 0

- Frequency of evaluation: 5 minutes

- On the next page, select or add an action group to notify you when the threshold is exceeded.

- Then click Next, add an appropriate alert name along with a description, and click Save.
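To see what this rule evaluates, the following Python sketch mimics the condition locally on a few made-up rows. It is an illustration only: the row contents are hypothetical, and the helpers simply mirror the query filters (`=~` is a case-insensitive equality and `contains` a case-insensitive substring match in KQL) plus the "count greater than 0 in the last 5 minutes" measurement.

```python
from datetime import datetime, timedelta
from typing import Dict, List


def is_over_quota_event(row: Dict) -> bool:
    """Mirror: where Category =~ "Ingestion" | where Detail contains "OverQuota"."""
    category = str(row.get("Category", "")).lower()
    detail = str(row.get("Detail", "")).lower()
    return category == "ingestion" and "overquota" in detail


def should_fire(
    rows: List[Dict],
    now: datetime,
    window: timedelta = timedelta(minutes=5),
    threshold: int = 0,
) -> bool:
    """Count matching rows inside the evaluation window and compare to threshold."""
    start = now - window
    count = sum(
        1
        for row in rows
        if start < row["TimeGenerated"] <= now and is_over_quota_event(row)
    )
    return count > threshold


if __name__ == "__main__":
    now = datetime(2025, 1, 1, 12, 0)
    # Hypothetical rows; real Detail text will differ.
    rows = [
        {"TimeGenerated": now - timedelta(minutes=2),
         "Category": "Ingestion", "Detail": "OverQuota: daily cap reached"},
        {"TimeGenerated": now - timedelta(minutes=30),
         "Category": "Ingestion", "Detail": "Some other ingestion message"},
    ]
    print(should_fire(rows, now))
```

Because the threshold is 0, a single matching event inside the 5-minute window is enough to trigger the alert.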

By creating this log search alert, you will be notified within minutes of the daily cap being reached in your Log Analytics Workspace (the rule above is evaluated every 5 minutes). This proactive approach helps you monitor and manage your costs effectively and lets you act quickly while ingestion is paused.

With the alert in place, you’ll be able to respond quickly, adjusting your sampling rates, retention policies, or workspace settings to prevent additional cost spikes.

Conclusion

Although Azure Log Analytics Workspace is an essential tool for cloud infrastructure monitoring, troubleshooting, and security, it’s crucial to properly manage the costs associated with it. Organizations can make sure they are getting the most out of their log data without running over budget by optimizing log ingestion, retention, query performance, and data export. Businesses can reduce cloud expenses and preserve operational efficiency by implementing effective cost management measures.

Understanding the value of logs and the costs associated with their storage is crucial for leveraging the full potential of Azure while keeping costs in check.

I’m a DevOps Engineer with 3 years of experience, passionate about building scalable and automated infrastructure. I write about Kubernetes, cloud automation, cost optimization, and DevOps tooling, aiming to simplify complex concepts with real-world insights. Outside of work, I enjoy exploring new DevOps tools, reading tech blogs, and playing badminton.
